So I used to draw pictures. I have never been a talented artist, but that wasn't because I didn't enjoy it or wasn't creative enough (it had more to do with poor fine motor control). Eventually, in the 90s, I discovered digital art, which helped me to overcome some of the fiddly issues with getting my fingers to do exactly what they are supposed to (at least I had an undo button).

I had fun with digital art for a long time. I've owned several convertible tablet/laptops with digitizers for drawing on the screen. These were great for making a quick sketch to illustrate a point, or to throw together a quick character doodle after rolling stats. The most recent incarnation of this hardware is 'Achoo', a Thinkpad X1 Yoga (Gen 7). This laptop has a screen that backflips backwards, vs the spin-and-close convertibles I used previously. I'll be honest, I kinda hate it. As such, I never really got back into the habit of doing art on this machine. That is actually okay, because shortly after I purchased this laptop, Stable Diffusion became a thing, and AI art was available to anyone with the hardware to run it.

Automated Art

At this point, I was learning how to write prompts that would generate the images I wanted. I did this for awhile, learned how to train a model on pictures of my family, made a few calendars, gave my folks an album of vacation photos with my kids that never happened. It was fun. I was making art, but I wasn't really doing the work of painting or sketching. My input reduced to just writing prompts.

Now, when I needed a new image, I could make it with just a few words. No time consuming pixel-tight selections, no more masking or fussing with layers. Unless I was doing something unique, advanced, or fancy, I could use the tool I felt came the most naturally to me. My words.

I still use tools like Gimp, imagemagick, etc when the situation calls for it, but for the most part, drawing pictures was now automated.

Pushing Buttons Like a Caveman

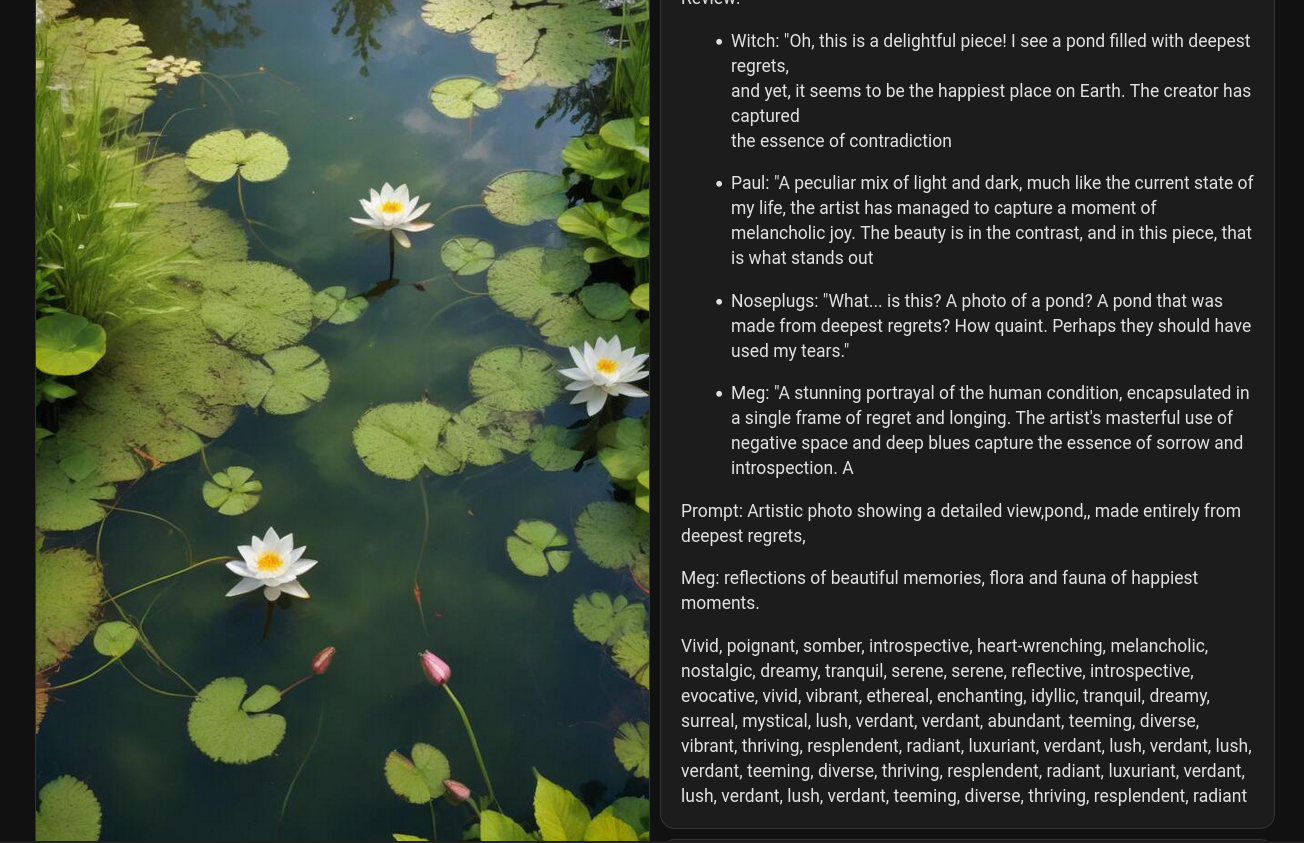

So automated art was fun and interesting, but I was still writing all of these prompts. Some of them were very basic and simple (like for the header image of this post, for example), but many required listing a great many detail words and fighting the RNG for just the right composition. How much further can I take this?

Well, if you have been paying attention the answer is quite far. The alpha version of Fine Art (sadly lost to time) was my first successful version of this. I broke the prompt down to a few things that were needed, and some more that were nice to have.

Needed:

Subject, Setting

Nice to have:

Composition clues, details--Clothing

|-Standing/Sitting |----Scenery

|-Left of/right of |----Objects

Actions---|----Time of day/year

Extras:

Art Styles, Lighting, negative prompts

I wrote a quick script that pulled randomly from an array of words and tried to assembly the result as a prompt. After a few false starts, I had something I was more or less happy with, and created the Fine Art automation based on it. I was now automating prompt generation.

We need to go deeper.

I thought I was pretty cool, with a fancy automatically generated image each day (plus a backup - always have a backup), but I still had to push a button to make it happen. In addition, the script could really only generate one type of image. There was a lot of variability in the model's latent space using just the prompt generator I had, but it was still a bit limited. What if I wanted to incorporate LoRAs or inject one-time words into the prompt? What if I needed something specific? The generator could only output random prompts at this point.

This is when I started laying the groundwork for The Gallery. I added a series of different "modes", a way to inject specific words or phrases into the prompt, loads of ways to handle getting "types" of things into the image without being too specific (giving lots of room for variety). There are more details in the Gallery post, I won't rehash them here.

Suffice to say when all was said and done, I was not only automating making the art, and automating writing the prompts, I was now onto automating writing of the prompt generators.

There's an app for that..

As I mentioned in my writeup of This from That, I had some successful experiments with using my LLM server to expand on the prompts the Gallery was making.

This ended up being so successful that after some further testing, I am now using the LLM to expand most (not quite all) of the sdapi requests the Gallery makes. This has almost universally improved the output.

Now I can write just a few words, and let the LLM turn it into an entire prompt. It will add background details, LoRAs when needed, extra adjectives that help to seed stable diffusion, and sometimes random gibberish (for better or worse).

So I have now largely automated writing the prompt generators that are used to generate the prompts that are used to generate the art.

Every ten minutes, I had a new piece of art to admire. Wait, admire? That sounds like work...

Automated...appraisal?

Over this past weekend, I was playing around with getting my chatter voices working with the LLM backend I was using for This from That. I have somewhat detailed personality files for each of these voices, based largely on the accumulated dialogue of years of use. I wondered to myself. What would our AI friends think of these images? They don't really think in the way that we do, but if I fed them information about the generated image as well as their personality files as part of their context, would the output appear to appreciate the input?

Well, now, when the Gallery image is generated, the following sequence occurs -

- Mode is selected

- Any modifiers are generated and held in a variable

- The preprompt is sent to Meg for expansion.

- The LLM returns a list of extra details, which are sent back to the LLM to ask for a Title for this work.

- The preprompt, LLM-expanded prompt, and the title are sent back to the LLM, (this time with character personalities loaded) and they are asked to give an opinion.

- The above step happens a total of 4 times, before generation is complete.

- Based on some circumstances, the voices may read their review outloud, so you can be told what to think of the art that just showed up on the monitor by an offshoot of the script that created it.

- The text of their review is posted to the Gallery Page next to the image, as well as sent to an XMPP server.

So now we have automated generating art, automated the automating of generating art, and finally, automating the appreciation of generated art, rendering myself completely unnecessary in the sequence.

Have you ever thought to yourself, "Man, this looking at art and deciding what I think about it is a big ask. If only there were some way to automate it, my life would be easier." ?

There are definitely some improvements I could make to ensure they come out more uniform and varied. I'll say one thing, tho - if this is what one bored nerd could throw together on his home hardware in a weekend, just imagine what a sufficiently funded business could do in a year?

All I know is after this, I will certainly never take an internet product review at face value.

Member discussion: